A/B testing dynamic ads requires a different approach than traditional static ad testing because the content automatically changes based on user data and behavior. You need to test the underlying personalization logic and creative automation rules rather than individual ad variations. This involves setting up control groups, isolating variables within automated systems, and tracking performance across different personalization levels to optimize your dynamic creative campaigns.

What are dynamic ads and why do they need A/B testing?

Dynamic ads are automated advertisements that change their content, images, headlines, or calls to action based on user data, browsing behavior, or real-time information. Unlike static ads that show the same content to everyone, dynamic ads personalize the message for each viewer using data feeds, user profiles, and behavioral triggers.

Traditional A/B testing approaches don’t work effectively for dynamic content because you’re not testing fixed creative elements. Instead of comparing two static versions, you’re evaluating different personalization strategies and automation rules. The challenge lies in isolating variables when multiple elements change simultaneously based on user data.

Dynamic ad testing requires specialized methodologies because the content varies for each user interaction. You need to test whether your personalization logic actually improves performance compared to non-personalized alternatives. This means evaluating the effectiveness of your data-driven creative decisions rather than specific visual or copy elements.

The complexity increases when you consider that different audience segments may respond differently to personalization levels. What works for returning customers might not work for first-time visitors, requiring segmented testing approaches within your dynamic creative campaigns.

How do you set up A/B tests for ads that change automatically?

Setting up A/B tests for dynamic ads involves testing personalization strategies rather than individual creative elements. Create control groups that see non-personalized versions alongside test groups experiencing different levels of dynamic content personalization. This allows you to measure whether automation actually improves performance over static alternatives.

Start by establishing your testing framework around the personalization rules rather than creative variations. For example, test “high personalization” (using multiple data points like location, browsing history, and demographics) against “low personalization” (using only basic demographic data) and a static control group.
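One way to keep the three groups stable is to assign each user to an arm deterministically, so the same person never drifts between personalization levels mid-test. The sketch below is a minimal illustration, not a platform feature: the arm names, the `assign_arm` function, and the experiment label are all hypothetical.

```python
import hashlib

# Hypothetical arm names mirroring the three groups described above.
ARMS = ("static_control", "low_personalization", "high_personalization")

def assign_arm(user_id: str, experiment: str = "dynamic-ads-test") -> str:
    """Map a user to one arm, stably across sessions and devices that share the ID."""
    # Hashing the experiment name together with the user ID keeps assignments
    # independent between experiments.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % len(ARMS)
    return ARMS[bucket]

if __name__ == "__main__":
    for uid in ("user-1", "user-2", "user-3"):
        print(uid, assign_arm(uid))
```

Because the assignment depends only on the user ID and the experiment label, it can be recomputed anywhere in the stack without storing a lookup table.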

Variable isolation becomes crucial when multiple elements change automatically. Focus on testing one personalization strategy at a time: for example, run dynamic product recommendations and dynamic pricing displays as separate tests rather than changing both at once. Ensure your creative automation platform can segment audiences properly to maintain test integrity.

Design your testing framework to account for the data requirements of dynamic content. Ensure you have sufficient user data to trigger personalization rules and enough traffic volume to achieve statistical significance across different personalized experiences. Consider seasonal variations and data freshness when planning test duration.
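To get a rough sense of "enough traffic volume", you can estimate the users needed per group before launching. The sketch below uses the standard normal-approximation formula for comparing two conversion rates; the function name and the example rates are assumptions for illustration, and a real plan should also account for how many groups and segments you compare.

```python
import math
from statistics import NormalDist

def sample_size_per_arm(p_control: float, p_test: float,
                        alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate users needed in each arm to detect p_control vs p_test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = p_control * (1 - p_control) + p_test * (1 - p_test)
    n = (z_alpha + z_beta) ** 2 * variance / (p_control - p_test) ** 2
    return math.ceil(n)

if __name__ == "__main__":
    # Hypothetical rates: 2.0% conversion for static, 2.5% hoped for with personalization.
    print(sample_size_per_arm(0.02, 0.025))
```

Smaller expected lifts require much larger groups, which is one reason to limit the number of personalization levels tested at once.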

Technical setup requires coordination between your ad platform and data sources. Implement proper tracking tags that can identify which personalization rules were triggered for each user, enabling accurate performance measurement across different automated creative variations.
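As an illustration of what such tracking might record, the sketch below defines a per-impression event that carries the test group and the personalization rules that fired. The field names and rule labels are hypothetical; an actual ad platform will have its own tag format.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class ImpressionEvent:
    """One ad impression, annotated with what drove the creative (hypothetical schema)."""
    user_id: str
    arm: str                                             # test group the user belongs to
    rules_triggered: list = field(default_factory=list)  # e.g. ["geo_offer"]
    used_fallback: bool = False                          # True when no rule had enough data

def to_tracking_payload(event: ImpressionEvent) -> str:
    """Serialize the event as JSON for a tracking endpoint or log line."""
    return json.dumps(asdict(event), sort_keys=True)

if __name__ == "__main__":
    event = ImpressionEvent("user-1", "high_personalization",
                            ["geo_offer", "recent_product"])
    print(to_tracking_payload(event))
```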

What metrics should you track when A/B testing dynamic ads?

Track engagement rates across different personalization levels, measuring how users interact with highly personalized content versus less personalized or static alternatives. Key metrics include click-through rates, time spent viewing ads, and interaction rates with dynamic elements like personalized product carousels or location-specific offers.

Conversion tracking becomes more complex with dynamic ads because you need to measure performance across multiple personalization scenarios. Track conversion rates for different data-driven creative variations, measuring not just overall performance but how effectively each personalization strategy drives desired actions.
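A minimal sketch of per-group measurement follows, assuming each logged event is a dictionary with hypothetical `arm`, `clicked`, and `converted` keys. It reports click-through and conversion rates per impression for each group; your own definition of a conversion rate (per click, per user) may differ.

```python
from collections import defaultdict

def rates_by_arm(events):
    """Return click-through and conversion rates per test group."""
    totals = defaultdict(lambda: {"impressions": 0, "clicks": 0, "conversions": 0})
    for e in events:
        t = totals[e["arm"]]
        t["impressions"] += 1
        t["clicks"] += int(e["clicked"])
        t["conversions"] += int(e["converted"])
    return {
        arm: {
            "ctr": t["clicks"] / t["impressions"],       # clicks per impression
            "cvr": t["conversions"] / t["impressions"],  # conversions per impression
        }
        for arm, t in totals.items()
    }

if __name__ == "__main__":
    sample = [
        {"arm": "static_control", "clicked": False, "converted": False},
        {"arm": "static_control", "clicked": True, "converted": False},
        {"arm": "high_personalization", "clicked": True, "converted": True},
        {"arm": "high_personalization", "clicked": True, "converted": False},
    ]
    print(rates_by_arm(sample))
```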

Creative automation testing requires specific metrics that traditional A/B testing might overlook. Monitor the frequency of personalization triggers, data utilization rates, and the percentage of users who see personalized versus fallback content when insufficient data is available.
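These coverage metrics can be computed from impression logs. The sketch below assumes each impression is a dictionary with a hypothetical `rules_triggered` list, where an empty list means the user saw fallback content.

```python
from collections import Counter

def personalization_coverage(impressions):
    """Share of impressions served as fallback, and how often each rule fired."""
    total = len(impressions)
    if total == 0:
        return {"fallback_rate": 0.0, "rule_frequency": {}}
    # An impression with no triggered rules is treated as fallback content.
    fallback = sum(1 for imp in impressions if not imp["rules_triggered"])
    rule_counts = Counter(
        rule for imp in impressions for rule in imp["rules_triggered"]
    )
    return {
        "fallback_rate": fallback / total,
        "rule_frequency": {rule: n / total for rule, n in rule_counts.items()},
    }

if __name__ == "__main__":
    log = [
        {"rules_triggered": ["geo_offer"]},
        {"rules_triggered": ["geo_offer", "recent_product"]},
        {"rules_triggered": []},
        {"rules_triggered": ["recent_product"]},
    ]
    print(personalization_coverage(log))
```

A high fallback rate in a "personalized" group means much of that group is effectively seeing the control experience, which dilutes any measured difference.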

Measure the effectiveness of your automated creative variations by tracking metrics like relevance scores, ad recall, and user sentiment across different personalization levels. This helps determine whether increased personalization actually improves user experience or creates ad fatigue.

Consider long-term metrics beyond immediate conversions, such as customer lifetime value and retention rates for users exposed to different personalization strategies. Dynamic ads often impact user relationships differently than static campaigns, making these extended metrics particularly valuable for campaign optimization.

How do you analyze A/B test results for personalized ad campaigns?

Analyze dynamic ad test results by examining performance across audience segments and personalization levels rather than focusing solely on overall campaign metrics. Look for patterns in how different user groups respond to various personalization strategies, identifying which data points drive the most effective creative automation.

Establishing statistical significance becomes harder with multiple variables and audience segments: each segment has a smaller sample than the campaign as a whole, and every additional comparison raises the chance of a false positive. Use segmented analysis to understand which personalization approaches work best for specific user types, geographic locations, or behavioral patterns. Avoid drawing conclusions from aggregated data that might mask important segment-specific insights.
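A segmented comparison might look like the sketch below, which runs a two-proportion z-test per segment and applies a Bonferroni correction so that testing many segments does not inflate false positives. The segment names and counts are invented for illustration, and Bonferroni is only one (conservative) choice of correction.

```python
import math
from statistics import NormalDist

def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for a difference in conversion rate between groups A and B."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))

def significant_segments(segments, alpha=0.05):
    """Map each segment to (p_value, is_significant) using a Bonferroni threshold."""
    if not segments:
        return {}
    threshold = alpha / len(segments)  # stricter cutoff for each extra segment
    results = {}
    for name, (conv_a, n_a, conv_b, n_b) in segments.items():
        p = two_proportion_p_value(conv_a, n_a, conv_b, n_b)
        results[name] = (p, p < threshold)
    return results

if __name__ == "__main__":
    # (control conversions, control users, personalized conversions, personalized users)
    data = {
        "returning_customers": (200, 10000, 300, 10000),
        "first_time_visitors": (100, 5000, 102, 5000),
    }
    for segment, (p, sig) in significant_segments(data).items():
        print(segment, round(p, 4), sig)
```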

Identify winning personalization strategies by comparing performance metrics across different automation rules and data utilization levels. Focus on understanding why certain personalization approaches succeed rather than just which ones perform better numerically.

Consider the interaction effects between different personalization elements when interpreting results. Dynamic content often combines multiple data points, so analyze how different combinations of personalized elements impact overall campaign performance and user engagement.
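If you have run a test where two personalized elements were switched on and off independently (a 2x2 design), a simple way to quantify their interaction is to compare the lift of one element with and without the other. The sketch below assumes you already have a conversion rate for each of the four cells; the element names and rates are hypothetical.

```python
def interaction_effect(rate_neither, rate_a_only, rate_b_only, rate_both):
    """Difference between element A's lift when B is on and its lift when B is off.

    A value near zero suggests the elements act independently; a positive value
    suggests they reinforce each other, a negative one that they overlap or clash.
    """
    lift_a_without_b = rate_a_only - rate_neither
    lift_a_with_b = rate_both - rate_b_only
    return lift_a_with_b - lift_a_without_b

if __name__ == "__main__":
    # Hypothetical rates: A = dynamic product recommendations, B = location-specific offer.
    effect = interaction_effect(
        rate_neither=0.020, rate_a_only=0.025, rate_b_only=0.024, rate_both=0.035
    )
    print(f"interaction: {effect:+.4f}")
```

This is a point estimate only; judging whether an interaction is real still requires enough traffic in every cell.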

Document your findings in a way that informs future dynamic creative campaigns. Create guidelines for when to use specific personalization strategies based on audience characteristics, campaign objectives, and available data quality. This builds institutional knowledge for more effective creative automation testing over time.

Understanding how to test dynamic ads effectively helps marketers optimize their automated creative campaigns and improve ad performance through data-driven personalization strategies. The key lies in testing the logic behind the automation rather than individual creative elements, ensuring your dynamic content truly enhances user experience and campaign effectiveness.

How Storyteq helps with dynamic ad testing

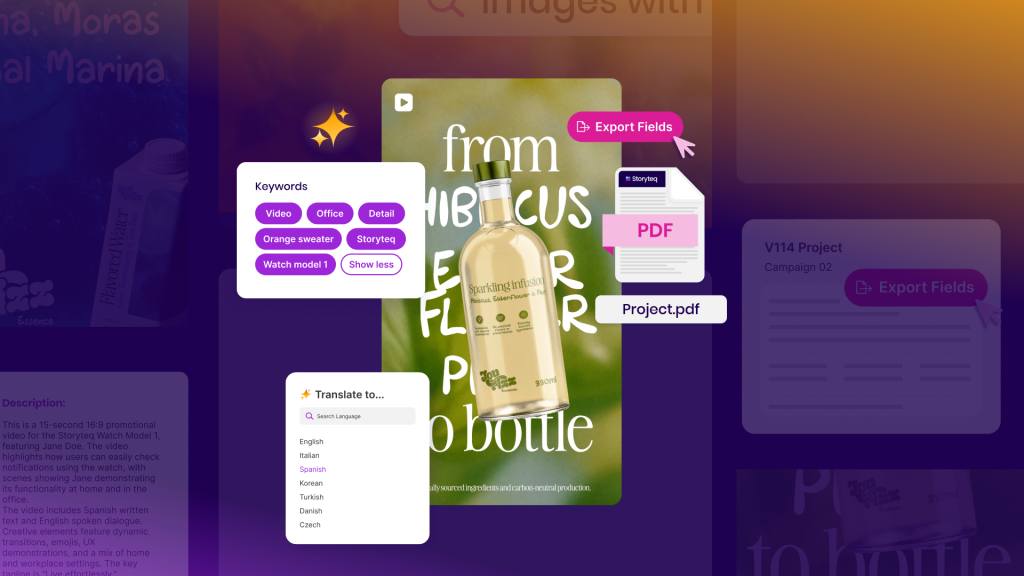

Storyteq provides a comprehensive creative automation platform that simplifies A/B testing for dynamic advertising campaigns. The platform enables marketers to test personalization strategies effectively while maintaining full control over their creative automation rules and data-driven content variations.

Key features that support dynamic ad testing include:

• Advanced audience segmentation tools that enable proper control group creation and variable isolation

• Real-time performance tracking across different personalization levels and automation rules

• Integrated analytics dashboard that measures creative automation effectiveness and user engagement metrics

• Data management capabilities that ensure consistent personalization triggers and fallback content delivery

• Testing framework that supports both simple A/B comparisons and complex multivariate personalization strategies

Ready to optimize your dynamic advertising campaigns through effective A/B testing? Discover how Storyteq’s creative automation platform can streamline your personalized ad testing process and improve campaign performance across all your dynamic creative initiatives.