A/B testing automated creatives requires different strategies than traditional manual testing approaches. While the fundamental principles remain the same, automated creative testing operates at a much greater scale and speed, allowing you to test thousands of variations simultaneously rather than just a few. Done well, it helps you optimise performance across multiple variables, formats, and audience segments while keeping results statistically valid and actionable.

What makes A/B testing automated creatives different from traditional testing?

Automated creative A/B testing differs from traditional methods primarily through scale, speed, and complexity management. Instead of manually creating 5–10 variations, automated systems can generate thousands of creative combinations by mixing different elements like headlines, images, colours, and calls to action. This allows for comprehensive testing across multiple variables simultaneously.
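To illustrate how quickly combinations multiply, here is a minimal Python sketch. The element lists are hypothetical placeholders, not output from any particular platform.

```python
from itertools import product

# Hypothetical creative elements; a real campaign would load these from templates.
headlines = ["Save 20% today", "Free shipping on all orders", "New season, new styles"]
images = ["lifestyle.jpg", "product.jpg"]
colours = ["blue", "green", "orange"]
ctas = ["Shop now", "Learn more"]

# One variant per combination of headline, image, colour, and call to action.
variants = [
    {"headline": h, "image": i, "colour": c, "cta": a}
    for h, i, c, a in product(headlines, images, colours, ctas)
]

print(len(variants))  # 3 * 2 * 3 * 2 = 36
```

Even this small set of options yields 36 variants; adding a fifth element with four options would raise that to 144.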

The real advantage lies in real-time optimisation capabilities. Traditional testing requires you to wait for statistical significance before making changes, often taking weeks. Automated systems continuously adjust and learn from performance data, shifting budget towards winning variations while testing new combinations in the background.
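One common way to shift budget towards winners while still exploring is a multi-armed bandit. The sketch below uses Thompson sampling as an illustration; it is an assumption about how such a system could work, not a description of any specific platform's algorithm, and the function name and data are hypothetical.

```python
import random

def thompson_budget_shares(stats, draws=10_000, seed=0):
    """Estimate what share of budget each variant should receive.

    stats maps variant name -> (conversions, impressions).
    A variant's share is the fraction of simulated draws in which
    its sampled conversion rate was the highest of all variants.
    """
    rng = random.Random(seed)
    wins = {name: 0 for name in stats}
    for _ in range(draws):
        sampled = {
            # Beta posterior with a uniform prior: Beta(1 + successes, 1 + failures).
            name: rng.betavariate(1 + conv, 1 + imps - conv)
            for name, (conv, imps) in stats.items()
        }
        wins[max(sampled, key=sampled.get)] += 1
    return {name: count / draws for name, count in wins.items()}

# Hypothetical performance data: (conversions, impressions).
observed = {"A": (30, 1000), "B": (45, 1000), "C": (5, 100)}
shares = thompson_budget_shares(observed)
print(shares)
```

Variant C has little data, so its estimate is uncertain and it keeps receiving a meaningful share of budget until more evidence arrives. This is the "testing in the background" behaviour described above.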

However, this creates unique challenges. Testing machine-generated content variations means you’re dealing with exponentially more data points and potential combinations. You need robust systems to track performance across thousands of variants while ensuring each test maintains statistical validity. The complexity requires different analytical approaches and more sophisticated attribution models to understand which specific elements drive performance improvements.

How do you set up effective A/B tests for automated creative campaigns?

Setting up automated creative tests requires systematic planning around variable selection, audience segmentation, and testing frameworks. Start by identifying your primary testing variables – typically headline variations, visual elements, colour schemes, and call-to-action buttons. Limit your initial tests to 3–4 variable categories to maintain manageable complexity while gathering meaningful insights.

Audience segmentation becomes more important in automated testing because you’re running multiple variations simultaneously. Create distinct audience segments based on demographics, behaviour, or funnel stage to prevent overlap and ensure clean test results. Each segment should be sufficiently large to reach statistical significance. A common rule of thumb is at least 1,000 users per variation, although the true requirement depends on your baseline conversion rate and the size of the effect you want to detect.
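One simple way to keep test groups from overlapping is to assign each user to exactly one variation deterministically. The following is a minimal sketch assuming you have a stable user identifier; the function name, test name, and variation labels are hypothetical.

```python
import hashlib

def assign_variation(user_id: str, test_name: str, variations: list[str]) -> str:
    """Deterministically map a user to exactly one variation of a test."""
    # Hashing the test name together with the user ID keeps assignments
    # independent between different tests.
    digest = hashlib.sha256(f"{test_name}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % len(variations)
    return variations[bucket]

variations = ["headline_a", "headline_b", "headline_c"]
print(assign_variation("user-123", "spring_campaign", variations))
```

Because the assignment depends only on the user ID and test name, the same user always sees the same variation, which avoids one source of contaminated results.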

Timeline planning differs significantly from manual testing. While traditional A/B tests might run for 2–4 weeks, automated creative testing operates on shorter cycles with continuous optimisation. Plan for 7–14 day test cycles with daily performance monitoring. This allows the system to identify winning combinations quickly while maintaining enough runtime for statistical confidence.

Sample size calculations become more complex with multiple variables. Use power analysis tools that account for your expected effect size, desired confidence level, and the number of variations being tested. Remember that testing more variables requires larger sample sizes to maintain statistical power across all combinations.
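As a rough illustration of such a calculation, the sketch below uses the standard normal-approximation formula for comparing two conversion rates, with a Bonferroni adjustment when several variations are compared against one control. The baseline rate and lift are hypothetical inputs, and a dedicated power analysis tool may use a more refined method.

```python
import math
from statistics import NormalDist

def sample_size_per_variation(baseline, lift, n_variations=2, alpha=0.05, power=0.8):
    """Approximate users needed per variation to detect a relative lift
    in conversion rate against a control (two-sided test)."""
    p1 = baseline
    p2 = baseline * (1 + lift)
    # Bonferroni: split alpha across the comparisons made against the control.
    comparisons = max(n_variations - 1, 1)
    z_alpha = NormalDist().inv_cdf(1 - alpha / (2 * comparisons))
    z_power = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)

# Hypothetical inputs: 2% baseline conversion rate, 20% relative lift.
n_two = sample_size_per_variation(0.02, 0.20, n_variations=2)
n_five = sample_size_per_variation(0.02, 0.20, n_variations=5)
print(n_two, n_five)
```

With these inputs the two-variation case comes out at roughly 21,000 users per variation, and the five-variation case is larger still. Small lifts on low baseline rates need far more traffic than a fixed per-variation minimum would suggest.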

Which metrics actually matter when testing automated creatives?

Focus on conversion-driven metrics rather than vanity metrics when evaluating automated creative performance. Click-through rates and impressions provide surface-level insights, but conversion rate, cost per acquisition, and return on ad spend reveal which creative elements actually drive business results. These metrics help you understand the full customer journey impact of your creative variations.
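The difference between surface-level and conversion-driven metrics is easy to see with a small calculation. This sketch uses made-up numbers for two hypothetical variants; the class and field names are illustrative only.

```python
from dataclasses import dataclass

@dataclass
class VariantStats:
    name: str
    impressions: int
    clicks: int
    conversions: int
    spend: float    # total ad spend for this variant
    revenue: float  # revenue attributed to this variant

    @property
    def ctr(self) -> float:
        """Click-through rate: clicks per impression."""
        return self.clicks / self.impressions if self.impressions else 0.0

    @property
    def conversion_rate(self) -> float:
        """Conversions per click."""
        return self.conversions / self.clicks if self.clicks else 0.0

    @property
    def cpa(self) -> float:
        """Cost per acquisition; infinite when there are no conversions."""
        return self.spend / self.conversions if self.conversions else float("inf")

    @property
    def roas(self) -> float:
        """Return on ad spend: revenue per unit of spend."""
        return self.revenue / self.spend if self.spend else 0.0

# Hypothetical data: A wins on CTR, B wins on CPA and ROAS.
a = VariantStats("A", impressions=10_000, clicks=400, conversions=8, spend=200.0, revenue=480.0)
b = VariantStats("B", impressions=10_000, clicks=250, conversions=10, spend=150.0, revenue=600.0)

for v in (a, b):
    print(v.name, f"CTR={v.ctr:.1%}", f"CPA={v.cpa:.2f}", f"ROAS={v.roas:.1f}")
```

Judged on click-through rate alone, variant A looks stronger, yet B acquires customers more cheaply and returns more revenue per unit of spend.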

Primary metrics should align with your campaign objectives. For awareness campaigns, focus on reach, impression share, and video completion rates. For conversion-focused campaigns, prioritise conversion rate, cost per conversion, and lifetime value metrics. Track these consistently across all creative variations to identify patterns and winning elements.

Creative performance testing requires secondary metrics that reveal engagement quality. Time spent viewing ads, social engagement rates, and bounce rates after clicking provide insights into creative resonance beyond basic conversion metrics. These help identify creatives that not only convert but also build positive brand associations.

Attribution becomes complex with multiple creative variations running simultaneously. Implement view-through conversion tracking and multi-touch attribution models to understand how different creative elements contribute to the conversion path. This prevents you from optimising for last-click conversions while missing the broader impact of your creative strategy.
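To show why the attribution model matters, the sketch below compares last-click attribution with a simple linear multi-touch model, which is one of several possible models. The conversion paths and creative names are hypothetical.

```python
from collections import defaultdict

def last_click_attribution(paths):
    """Give all of each conversion's value to the final creative in its path."""
    credit = defaultdict(float)
    for touchpoints, value in paths:
        if touchpoints:
            credit[touchpoints[-1]] += value
    return dict(credit)

def linear_attribution(paths):
    """Split each conversion's value equally across every creative in its path."""
    credit = defaultdict(float)
    for touchpoints, value in paths:
        if not touchpoints:
            continue
        share = value / len(touchpoints)
        for creative in touchpoints:
            credit[creative] += share
    return dict(credit)

# Hypothetical conversion paths: (ordered creatives seen, conversion value).
paths = [
    (["video_a", "banner_b"], 100.0),
    (["video_a", "banner_c"], 60.0),
    (["banner_b"], 40.0),
]

last_click = last_click_attribution(paths)
linear = linear_attribution(paths)
print(last_click)
print(linear)
```

In this example `video_a` receives no credit under last-click attribution, although it appears early in two of the three converting paths. The linear model surfaces that contribution.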

What are the most common mistakes in automated creative A/B testing?

The most frequent mistake involves testing too many variables simultaneously without proper statistical planning. While automation enables testing thousands of combinations, this often leads to statistical noise rather than actionable insights. Each additional variable multiplies the number of combinations, and every combination needs its own adequate sample, so the total traffic required grows rapidly and your findings can become diluted across too many variations.

Insufficient test duration represents another critical error. Many marketers stop tests too early when they see promising initial results, before reaching statistical significance. Automated ad testing requires patience – even with faster optimisation cycles, you need adequate data to distinguish between genuine performance differences and random variation.

Poor audience segmentation creates overlapping test groups that contaminate results. When the same users see multiple creative variations, or when audience segments aren’t properly isolated, you cannot accurately attribute performance differences to specific creative elements. This leads to false conclusions about which elements actually drive results.

Misinterpreting statistical significance in automated environments causes many optimisation mistakes. A variation that shows higher performance is not necessarily significantly better, because the gap may be random variation. Automated systems compare many variations at once, and the more comparisons you make, the more likely it is that some will look like winners purely by chance (false positives). Always verify that performance differences meet your predetermined confidence thresholds, adjusted for the number of comparisons, before making optimisation decisions.
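A minimal sketch of such a check is shown below, using a pooled two-proportion z-test with a Bonferroni correction for multiple comparisons. The conversion counts are hypothetical, and other tests or corrections may suit your data better.

```python
import math
from statistics import NormalDist

def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for a difference in conversion rates (pooled z-test)."""
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))

def significant_variants(control, variants, alpha=0.05):
    """Compare each variant with the control using a Bonferroni-adjusted threshold.

    Returns a dict mapping variant name -> (p_value, is_significant).
    """
    threshold = alpha / len(variants)  # stricter cut-off for more comparisons
    results = {}
    for name, (conv, n) in variants.items():
        p = two_proportion_p_value(control[0], control[1], conv, n)
        results[name] = (p, p < threshold)
    return results

# Hypothetical data: (conversions, users).
control = (200, 10_000)
variants = {"v1": (230, 10_000), "v2": (245, 10_000), "v3": (290, 10_000)}

results = significant_variants(control, variants)
for name, (p, significant) in results.items():
    print(name, f"p={p:.4f}", "significant" if significant else "not significant")
```

Here `v2` would pass an unadjusted 95% threshold but fails once the threshold is tightened for three comparisons. This is the kind of false positive that large-scale testing makes more likely.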

Successful automated creative testing requires balancing scale advantages with statistical rigour. By avoiding these common pitfalls and focusing on meaningful metrics, you can leverage automation to improve creative performance while maintaining reliable, actionable insights. The key lies in systematic planning, proper statistical frameworks, and the patience to let tests reach meaningful conclusions.

How Storyteq helps with automated creative A/B testing

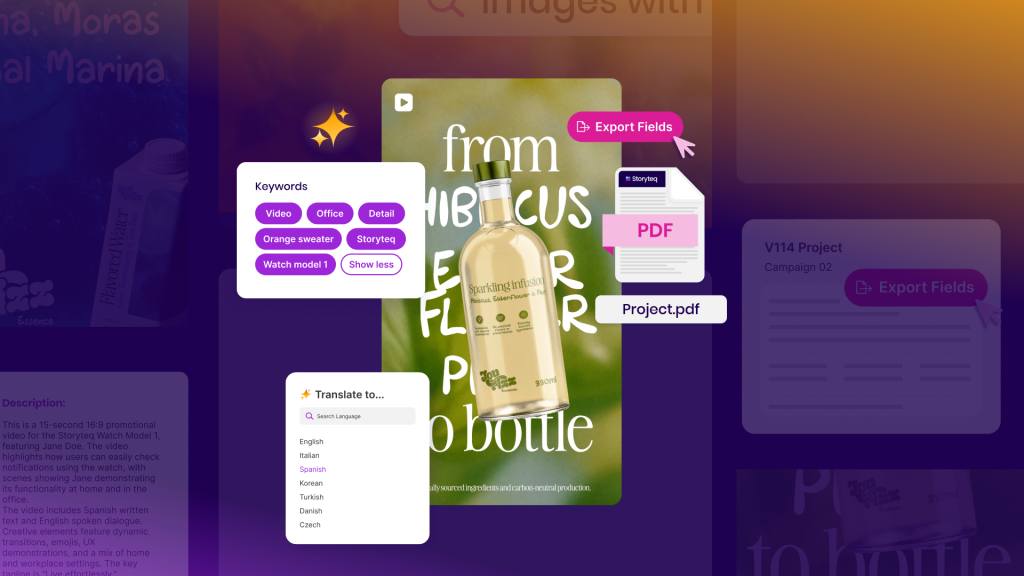

Storyteq provides a comprehensive solution for automated creative A/B testing that eliminates the common challenges brands face when scaling their testing programs. Our Creative Automation Platform enables you to:

- Generate unlimited creative variations while maintaining brand consistency through template-based systems

- Implement proper statistical frameworks with built-in significance testing and sample size calculations

- Track performance across thousands of variants with sophisticated attribution models

- Automate audience segmentation to prevent test contamination and ensure clean results

- Monitor real-time performance with daily optimisation insights and automated budget allocation

Book a demo to discover how we can help you implement effective A/B testing for your automated creative campaigns.

Frequently Asked Questions

How long should I wait before making changes to underperforming automated creative variations?

Wait at least 7–14 days and ensure you've reached statistical significance before making changes. Even if a variation appears to be underperforming initially, it needs sufficient data to confirm this isn't due to random variation. Check that you have at least 1,000 interactions per variation and that the performance difference is significant at your predetermined confidence level (typically 95%) before pausing or modifying creative elements.

What's the minimum budget required to run effective automated creative A/B tests?

You need sufficient budget to generate at least 1,000 interactions per creative variation within your test timeframe. For testing 4–5 variations, this typically requires a minimum daily budget of $100–200, depending on your industry's average cost per click. If you're testing across multiple audience segments, multiply this by the number of segments to ensure each gets adequate exposure for reliable results.
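The arithmetic behind this estimate can be sketched as follows. The example treats each interaction as a paid click and assumes a hypothetical cost per click of $0.50 over a 14-day test, so substitute your own figures.

```python
def minimum_daily_budget(variations, interactions_per_variation,
                         cost_per_click, test_days, segments=1):
    """Daily budget needed to buy the target number of clicks for every
    variation in every segment within the test window."""
    total_clicks = variations * interactions_per_variation * segments
    return total_clicks * cost_per_click / test_days

# Hypothetical inputs: 5 variations, 1,000 clicks each, $0.50 CPC, 14 days.
single_segment = minimum_daily_budget(5, 1000, 0.50, 14)
three_segments = minimum_daily_budget(5, 1000, 0.50, 14, segments=3)
print(f"${single_segment:.2f} per day, ${three_segments:.2f} per day for 3 segments")
```

With these assumptions the single-segment budget works out to about $179 per day, and it scales linearly with the number of segments.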

Can I test automated creatives across different platforms simultaneously, or should I test each platform separately?

Test each platform separately initially, as creative performance varies significantly between platforms due to different user behaviours, ad formats, and algorithms. Once you identify winning creative elements on each platform, you can run cross-platform tests to understand broader creative principles. This approach prevents platform-specific factors from skewing your results and provides cleaner insights into creative effectiveness.

How do I handle seasonal variations or external events that might affect my automated creative test results?

Monitor external factors closely and be prepared to pause or restart tests if significant events occur during your testing period. Major holidays, news events, or competitor campaigns can skew results. If external factors impact your test midway, either extend the testing period to account for the variation or restart with a clean dataset. Document these events to help interpret results and inform future testing strategies.

What should I do if my automated creative variations are all performing similarly with no clear winner?

Similar performance across variations often indicates that you're testing elements that don't significantly affect user behaviour, or that your sample size isn't large enough to detect meaningful differences. Try testing more dramatically different creative elements (completely different value propositions, visual styles, or messaging approaches) or extend your test duration. Sometimes 'no clear winner' is itself valuable data, indicating that your current creative approach is already well optimised.

How can I ensure my automated creative tests don't negatively impact brand consistency?

Establish clear brand guidelines and creative boundaries before launching automated tests. Define the approved colour palettes, fonts, messaging tones, and visual elements that all variations must adhere to. Use template-based systems that allow testing within brand parameters rather than completely unrestricted generation. Regular creative audits and approval workflows help maintain brand integrity while still enabling meaningful performance optimisation.